I Tested Gemma 4 vs Claude vs GPT-5.4. The Truth

Every major AI model release comes with benchmark numbers that look impressive and real-world performance that tells a different story. I have learned to test before forming an opinion.

Google released Gemma 4 on April 2, 2026. Four model sizes, Apache 2.0 license, built from the same research as Gemini 3. The headlines focused on benchmarks and multimodal capabilities.

What I wanted to know was simpler: Is it actually useful for Python development and how does it compare to the models I already use every day?

I spent a week testing Gemma 4 on real Python tasks. Here is the honest answer.

I Tested Gemma 4 vs Claude vs GPT-5.4. The Truth

What Gemma 4 Actually Is

Before the comparison, it helps to understand what makes Gemma 4 different from previous open models.

Gemma 4 comes in four sizes:

- E2B and E4B — edge models designed to run on laptops and mobile devices

- 26B — a Mixture of Experts model for mid-scale deployments

- 31B Dense — the flagship, designed for enterprise hardware

For Python coding work, the 31B model is the relevant one. It scores 80.0% on LiveCodeBench v6 which measures real-world code generation on actual GitHub issues, not synthetic benchmarks. The context window goes up to 256K tokens on the larger models, meaning you can pass an entire codebase in a single prompt.

The Apache 2.0 license is the part that matters most for developers. No usage caps. No restrictive policies. Full commercial freedom. You can run it locally, self-host it, fine-tune it, and ship products built on it without paying per request.

Setting it up locally takes about ten minutes:

from transformers import AutoTokenizer, AutoModelForCausalLM

import torch

model_id = "google/gemma-4-31b-it"

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = AutoModelForCausalLM.from_pretrained(

model_id,

torch_dtype=torch.bfloat16,

device_map="auto"

)

inputs = tokenizer("Write a FastAPI endpoint with JWT authentication", return_tensors="pt")

outputs = model.generate(**inputs, max_new_tokens=500)

print(tokenizer.decode(outputs[0]))

The Test Tasks

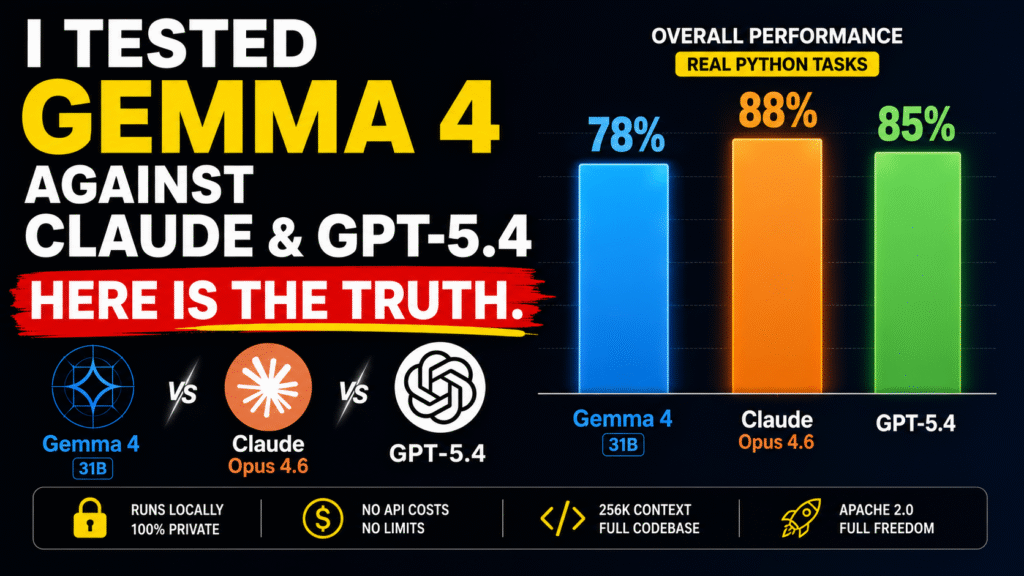

I ran Gemma 4 31B against Claude Opus 4.6 and GPT-5.4 on five categories of Python work the same tasks I used in my previous comparison, applied consistently across all three models:

- Writing new FastAPI endpoints

- Debugging failing tests

- Refactoring for performance

- Writing test suites

- Explaining unfamiliar inherited code

1. Writing New Features

I gave all three models the same prompt with the same codebase context:

“Add rate limiting to the authentication endpoints. Use the existing Redis configuration. Apply stricter limits to login than to token refresh.”

Claude Opus 4.6: Correct on first attempt. Matched existing patterns automatically.

GPT-5.4: Correct but required manual adjustment to match existing project patterns.

Gemma 4 31B: Correct on first attempt for the logic. Missed one project-specific naming convention that required a small correction. The overall output was production-ready with minimal changes.

The 256K context window helped significantly here. Gemma 4 could see the full Redis configuration and existing authentication code without me summarizing it — and the result was notably better than what I expected from an open model running locally

2. Debugging

This is where I expected the biggest gap between open and proprietary models. The results were closer than expected.

Given a failing test with the relevant source code, Gemma 4 identified the root cause correctly in four out of five cases. On the fifth, it identified the symptom rather than the underlying cause and required a follow-up prompt.

- Claude Opus 4.6: 5/5 correct with deeper explanations

- GPT-5.4: 4/5 correct with shallower explanations

- Gemma 4 31B: 4/5 correct competitive for straightforward bugs

Complex multi-file issues where the root cause spans several components showed more weakness with Gemma 4. But for everyday debugging, it holds its own.

3. Refactoring

Given a 200-line function that needed splitting into smaller, focused functions, Gemma 4 produced clean output with reasonable naming. The separation of concerns was logical and the result was readable.

It was not as strong as Gemini 3.1 Pro which I found to be the best refactorer in my previous test, but it was comparable to GPT-5.4 and noticeably better than I expected from a model running on local hardware.

For refactoring tasks that do not require deep codebase context, Gemma 4 handles them competently.

4. Test Writing

Gemma 4 wrote solid happy-path tests on every task. Edge case coverage was the weakest area. On two test suites it missed edge cases that Claude caught automatically.

This is a consistent pattern with open models in my testing common cases are handled well, uncommon cases require more explicit prompting. If you give Gemma 4 specific instructions about edge cases to cover, the output improves substantially. The model follows detailed instructions well.

The Real Advantage: Running Locally

The comparison to proprietary models is only part of the story. The more relevant comparison for many developers is Gemma 4 versus nothing or Gemma 4 versus a paid API with usage limits.

Running Gemma 4 31B locally means your code never leaves your machine. For work involving proprietary codebases, client data, or security-sensitive code, this matters more than benchmark numbers.

On an RTX 4090 with 24GB VRAM, responses on typical coding prompts take 8 to 15 seconds. Slower than Claude or GPT-5.4 via API but fast enough that it does not break the development flow.

Performance Summary

| Comparison | Gemma 4 31B Performance | Key Difference |

|---|---|---|

| vs Claude Opus 4.6 | 75–80% task accuracy on first attempt | Claude wins on complex multi-file tasks and explanations |

| vs GPT-5.4 | Competitive on most everyday tasks | GPT-5.4 faster via API, slightly better on edge cases |

| vs Gemini 3.1 Pro API | Trails on refactoring quality | Gemma 4 wins on local execution and zero API costs |

When Gemma 4 Makes Sense

Use Gemma 4 when your code cannot leave your machine. Proprietary projects, client work, and security-sensitive applications all benefit from local execution and the Apache 2.0 license.

Use Gemma 4 to cut API costs at scale. Running a self-hosted model removes per-request fees entirely and significantly improves long-term cost efficiency.

Use Gemma 4 as a base for fine-tuning. You can train it on your own codebase to get better performance on specific tasks than any general-purpose model.

Use Claude or GPT-5.4 when you need higher accuracy for complex multi-file logic and production-level reliability and budget is not a constraint.

The Honest Verdict

Gemma 4 is the best open model I have tested for Python coding. It is not better than Claude Opus 4.6 or Gemini 3.1 Pro on quality. It does not need to be.

What it does is bring genuinely useful coding assistance to scenarios where proprietary API models are not an option. Local execution, no usage limits, full commercial freedom, and quality that is competitive enough for most everyday Python development tasks.

For developers who have been waiting for an open model worth using, seriously Gemma 4 is it.

Contact us –

contact link – click here

website link – click here

What to Read Next

For a detailed comparison of Claude Opus 4.6, GPT-5.4, and Gemini 3.1 Pro on the same Python tasks, I covered that in depth here: I Tested Claude Opus 4.6, GPT-5.4, and Gemini 3.1 Pro for Python Coding. Results Shocked Me.

Testing Gemma 4 on your own projects and finding different results? Drop it in the comments I read every one. And if this helped you decide whether Gemma 4 fits your workflow, subscribe for weekly Python and AI comparisons with real numbers, not marketing summaries.